
Most of the discussion about approximate solutions to $Ax = b$ concerns minimizing the $l_2$ equation error $\|Ax - b\|_2$ and/or the $l_2$ norm of the solution $\|x\|_2$, because in some cases that can be done with analytic formulas and because the $l_2$ norm has an energy interpretation. However, both the $l_1$ and the $l_\infty$ norms [link] have well-known, important applications [link] , [link] and the more general $l_p$ error is remarkably flexible [link] , [link] . Donoho has shown [link] that $l_1$ optimization gives essentially the same sparsity as the true sparsity measure in $l_0$.

In some cases, one uses a different norm for the minimization of the equation error than for the minimization of the solution norm. In other cases, one minimizes a weighted error to emphasize some equations relative to others [link] . A modification allows minimizing according to one norm for one set of equations and another for a different set. A more general error measure than a norm can also be used, such as a polynomial error [link] , which does not satisfy the scaling requirement of a norm but is convex. One could even use the so-called $l_p$ norm for $0 < p < 1$, which is not even convex but is an interesting tool for obtaining sparse solutions.

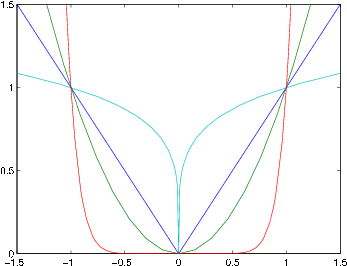

Note from the figure how the $l_p$ norm with large $p$ puts a large penalty on large errors, which gives a Chebyshev-like solution. The $l_p$ measure with $p < 1$ puts a large penalty on small errors, making them tend to zero. This (and the $l_1$ norm) gives a sparse solution.
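The effect described above can be checked numerically. The following is a minimal sketch, assuming the per-element error contribution is $|e|^p$; the function name `penalty` and the exponent values are illustrative choices and are not necessarily the ones plotted in the figure.

```python
def penalty(e, p):
    """Per-element contribution |e|^p to the l_p error measure."""
    return abs(e) ** p


# Compare a small error (0.1) and a large error (2.0) for several exponents.
# The exponents are illustrative assumptions, not taken from the figure.
for p in (0.2, 1, 2, 10):
    small = penalty(0.1, p)
    large = penalty(2.0, p)
    print(f"p = {p:>4}: |0.1|^p = {small:.3e}, |2.0|^p = {large:.3e}")
```

For a large exponent the small error contributes almost nothing while the large error dominates. For an exponent below one the small error is penalized far more heavily than it would be under the squared error, which is what pushes small errors toward zero.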

The IRLS (iterative reweighted least squares) algorithm builds an iterative method from the analytical solution of the weighted least squares problem, using iterative reweighting to converge to the optimal $l_p$ approximation [link] .

If one poses the $l_p$ approximation problem in solving an overdetermined set of equations (case 2 from Chapter 3), it comes from defining the equation error vector

$$e = Ax - b$$

and minimizing the p-norm

$$\|e\|_p = \left( \sum_i |e_i|^p \right)^{1/p}$$

or

$$\|e\|_p^p = \sum_i |e_i|^p$$

neither of which can we minimize easily. However, we do have formulas [link] to find the minimum of the weighted squared error

$$\|We\|_2^2 = \sum_i w_i^2 |e_i|^2$$

one of which is derived in [link] , equation [link] and is

$$x = [A^T W^T W A]^{-1} A^T W^T W b$$

where $W$ is a diagonal matrix of the error weights, $w_i$. From this, we propose the iterative reweighted least squared (IRLS) error algorithm, which starts with unity weighting, $W = I$, solves for an initial $x$ with [link] , calculates a new error from [link] , which is then used to set a new weighting matrix

$$W = \mathrm{diag}\left( |e_i|^{(p-2)/2} \right)$$

to be used in the next iteration of [link] . Using this, we find a new solution $x$ and repeat until convergence (if it happens!).
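The loop described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the author's program. It assumes the error is $e = Ax - b$ and the weights are $w_i = |e_i|^{(p-2)/2}$, so that the weighted squared error equals $\sum_i |e_i|^p$ at the current iterate. The function name `irls`, the iteration cap `n_iter`, the floor `eps` (which guards against dividing by a zero error when $p < 2$), and the stopping tolerance `tol` are all assumptions added for the example.

```python
import numpy as np


def irls(A, b, p, n_iter=50, eps=1e-8, tol=1e-10):
    """Basic IRLS sketch for minimizing ||Ax - b||_p with A overdetermined."""
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    # Unity weighting (W = I): the first solve is ordinary least squares.
    x = np.linalg.lstsq(A, b, rcond=None)[0]
    for _ in range(n_iter):
        e = A @ x - b
        # w_i = |e_i|^((p-2)/2); eps is an assumed floor for near-zero errors.
        w = np.maximum(np.abs(e), eps) ** ((p - 2) / 2)
        # Weighted least squares: minimize ||W (A x - b)||_2 with W = diag(w).
        x_new = np.linalg.lstsq(w[:, None] * A, w * b, rcond=None)[0]
        converged = np.linalg.norm(x_new - x) <= tol * (1.0 + np.linalg.norm(x))
        x = x_new
        if converged:
            break
    return x


# Fit a straight line to five points, the last of which is an outlier.
A = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0], [1.0, 3.0], [1.0, 4.0]])
b = np.array([0.1, 0.9, 2.2, 2.8, 10.0])
print("p = 2.0:", irls(A, b, p=2.0))
print("p = 1.5:", irls(A, b, p=1.5))
```

The sketch solves each weighted problem with `np.linalg.lstsq` applied to the row-scaled system instead of forming the normal equations explicitly. Both give the same minimizer when $A$ has full column rank, and the least squares solver is usually better conditioned. With $p = 2$ the weights are all one, so the loop returns the ordinary least squares solution after a single pass.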

This core idea has been repeatedly proposed and developed in different application areas over the past 50 years with varying success [link] . Used in this basic form, it reliably converges for a limited range of $p$. In 1990, a modification was made to partially update the solution each iteration with
